#DOCKER NETWORK IPAM HOW TO#

L2bridge networks are highly programmable. More details on how to configure l2bridge can be found here. In configuration 2, users will need to add an endpoint on the host network compartment that acts as a gateway and configure routing capabilities for the designated prefix.

#DOCKER NETWORK IPAM MAC#

In l2bridge, container network traffic will have the same MAC address as the host due to a Layer-2 address translation (MAC re-write) operation on ingress and egress. In datacenters, this helps alleviate the stress on switches having to learn MAC addresses of sometimes short-lived containers.
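The effect of the MAC re-write on a physical switch can be illustrated with a toy model. This is only a sketch in Python: the `learned_macs` helper, the host MAC, and the container MACs are all hypothetical, and no real switch or Windows networking API is involved.

```python
def learned_macs(frames, rewrite_to=None):
    """Return the set of source MACs a physical switch would learn.

    frames: iterable of (container_mac, payload) tuples leaving the host.
    rewrite_to: host MAC applied on egress (l2bridge-style re-write),
                or None to leave the container MAC untouched.
    """
    table = set()
    for src_mac, _payload in frames:
        table.add(rewrite_to if rewrite_to is not None else src_mac)
    return table


HOST_MAC = "00:15:5d:00:00:01"  # hypothetical host adapter MAC
# 50 hypothetical containers, each with its own MAC address.
frames = [(f"00:15:5d:aa:00:{i:02x}", b"data") for i in range(50)]

without_rewrite = learned_macs(frames)
with_rewrite = learned_macs(frames, rewrite_to=HOST_MAC)
print(len(without_rewrite), len(with_rewrite))
```

In this model the switch learns one entry per container without the re-write, and a single entry (the host's) with it, however many containers come and go.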

#DOCKER NETWORK IPAM DRIVER#

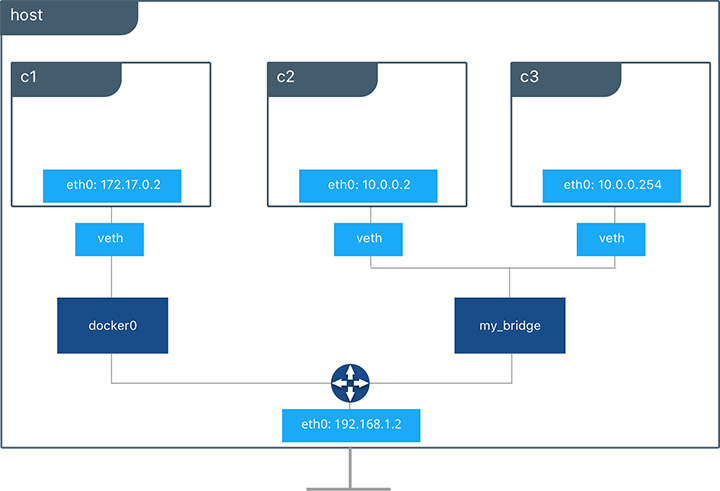

On overlay networks that use NAT rules for outbound connectivity, a given container receives one IP address. It also means that ICMP-based tools such as ping or Test-NetConnection should be configured using their TCP/UDP options in debugging situations.

To create a new overlay network with subnet 10.244.0.0/24, DNS server 168.63.129.16, and VSID 4096:

docker network create -d "overlay" --attachable --subnet "10.244.0.0/24" -o com.docker.network.windowsshim.dnsservers="168.63.129.16" -o com.docker.network.driver.overlay.vxlanid_list="4096" my_overlay

Containers attached to a network created with the 'l2bridge' driver will be connected to the physical network through an external Hyper-V switch.
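For the TCP-based debugging mentioned above, a connectivity probe does not have to rely on ICMP at all. The following is a minimal Python sketch using only the standard library; the function name `tcp_reachable` and the default timeout are assumptions for illustration, not part of Docker or of any Windows tooling.

```python
import socket


def tcp_reachable(host, port, timeout=2.0):
    """Return True if a TCP connection to (host, port) succeeds within timeout."""
    try:
        # create_connection performs a full TCP handshake, so no ICMP is needed.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and unreachable networks.
        return False
```

A call such as `tcp_reachable("10.244.0.5", 80)` would probe a container on the 10.244.0.0/24 overlay subnet; the address and port here are hypothetical and need to match a service that is actually listening.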

#DOCKER NETWORK IPAM WINDOWS#

On Windows Server 2019 and above, overlay networks created by Docker Swarm leverage VFP NAT rules for outbound connectivity.

Requires: On Windows Server 2016, overlay networking requires KB4015217.

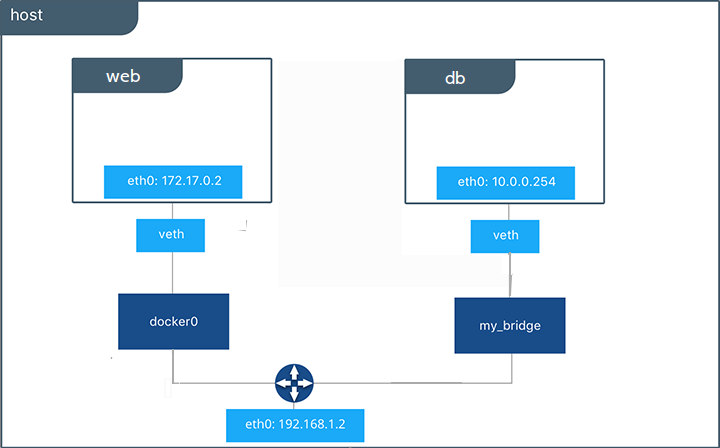

Requires: On Windows Server 2019, overlay networking requires KB4489899.

Requires: Make sure your environment satisfies these required prerequisites for creating overlay networks.

Popularly used by container orchestrators such as Docker Swarm and Kubernetes, containers attached to an overlay network can communicate with other containers attached to the same network across multiple container hosts. Each overlay network is created with its own IP subnet, defined by a private IP prefix. The overlay network driver uses VXLAN encapsulation to achieve network traffic isolation between tenant container networks and enables re-using IP addresses across overlay networks.

Due to the following requirement, connecting your container hosts over a transparent network is not supported on Azure VMs.

Requires: When this mode is used in a virtualization scenario (container host is a VM), MAC address spoofing is required.

This question seems to be quite common, but I’ve never found a satisfactory answer to it. When setting up the network with docker-compose instead of plain docker, there is additional confusion, e.g. because of "Support IPAM gateway in version 3.x".

As a developer, I want to be able to access the application (here: Django, but it could be any other backend framework as well) running inside a Docker container on an Ubuntu Server from another machine (Ubuntu Desktop).

To illustrate the question, refer to this diagram (draw.io image with embedded diagram data) showing a typical network setup of IoT applications during development. Here the IP addresses of the Ubuntu Desktop and the Ubuntu Server are assigned statically (another usual configuration during development would be dynamically assigned IP addresses via DHCP from a router in a LAN).

It seems reasonable to use the custom bridge network mode for the Docker network, plus forwarding from the Ubuntu Server's physical IP address to the IP address of the nginx container in the custom bridge network.

The simplified docker-compose.yml uses an external network created with docker network create --gateway 10.5.0.1 --subnet 10.5.0.0/16 custom_bridge:

version: "3.5"

The simplified, relevant part of nginx.
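The IPAM values used for custom_bridge (gateway 10.5.0.1, subnet 10.5.0.0/16) can be sanity-checked before the network is created. The sketch below uses Python's standard `ipaddress` module; the helper name `check_ipam` and the shape of its return value are assumptions made for illustration and are not part of Docker or docker-compose.

```python
import ipaddress


def check_ipam(subnet, gateway):
    """Validate that gateway is a usable host address inside subnet."""
    # strict=True rejects subnets with host bits set, e.g. "10.5.0.1/16".
    net = ipaddress.ip_network(subnet, strict=True)
    gw = ipaddress.ip_address(gateway)
    usable = gw in net and gw not in (net.network_address, net.broadcast_address)
    return {
        "subnet": str(net),
        "gateway": str(gw),
        "gateway_ok": usable,
        # Total addresses minus the network and broadcast addresses.
        "host_addresses": net.num_addresses - 2,
    }


result = check_ipam("10.5.0.0/16", "10.5.0.1")
print(result)
```

A gateway outside the prefix, such as 10.6.0.1, would come back with `gateway_ok` set to False, which is the kind of mismatch worth catching before debugging routing between the Ubuntu Server and the nginx container.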